|

Date

|

Topic

|

Reading Response

|

|

1/14

| First day of class: Introduction

|

|

|

1/16

| Secord day of class: Introduction, continued

| Chapter 1

|

|

1/21

| Bandits

| Chapter 2

|

|

1/23

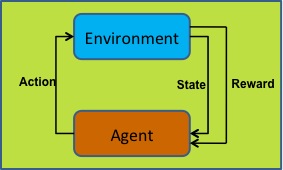

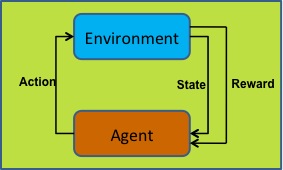

| Reinforcement Learning Problem Definition

| Chapter 3

|

|

1/28

| Dynamic Programming

| Chapter 4

|

|

1/30

| Dynamic Programming

|

|

|

2/4

| Monte Carlo Methods

| Chapter 5

|

|

2/6

| Monte Carlo Methods, Temporal Difference Learning

| Chapter 6: 6.1-6.3

|

|

2/11

| Temporal Difference Learning

| Chapter 6: 6.4-6.10

Sign up for a time to present to the class: http://doodle.com/2p2wz39vebwdzktn

|

|

2/13

| Eligibility Traces

| Chapter 7

|

|

2/18

| Generalization and Function Approximation

| Chapter 8

|

|

2/20

| Generalization and Function Approximation

| IFSA: Incremental Feature-Set Augmentation for Reinforcement Learning Tasks

|

|

2/25

| Planning and Learning

| Chapter 9

|

|

2/27

| Planning and Learning

| Read the R-Max paper, at least through section 3.

|

|

3/4

| IRL, Yusen Presentation/Discussion on Inverse Reinforcement Learning

Matt's later slides

|

|

|

3/6

|

Multi-agent RL, Xiyu Presentation/Discussion,

Matt's later slides

|

|

|

3/11

|

David Presentation/Discussion on Robotics,

Matt's notes

|

|

|

3/13

| Anthony Presentation/Discussion on Multiagent RL

|

|

|

3/25

|

Bei Presentation/Discussion on Learning from Human Rewards

|

|

|

3/27

|

Chris Presentation/Discussion on RL in traffic control,

Matt's notes

|

|

|

4/1

|

Beiyu Presentation/Discussion on hierarchical RL

| Final project proposal due by 3/31 at 3:00pm. Please submit via Angel

|

|

4/3

| Dmitry Presentation/Discussion on RL in SLAM

Two Videos

Matt's Notes

|

|

|

4/8

| Gabe Presentation/Discussion on learning in quadcopters

|

|

|

4/10

| Josh Presentation/Discussion on Game Playing

Discussion on Function Approximation (on board)

|

|

|

4/15

| Transfer Learning

| 4th Exercise due

Read Transfer in Reinforcement Learning: a Framework and a Survey by A. Lazaric and write a response. Read sections 1, 2, 6. Also, read one of section 3, 4, or 5.

Please vote on what's next in the class.

|

|

4/17

| Transfer Learning, continued

|

|

|

4/22

| POMDPs

| Read the tutorial here.

At a minimum, read from "Background on POMDPs" through "General Form of a POMDP solution."

No reading response is required.

|

|

4/24

| Reward Shaping

|

|

|

4/29

| Intrinsic RL

|

|

|

5/1

| 5 min presentations on final project progress

|

|

|

5/2 | Last day to ask Matt questions before he leaves the country | |

|

5/8

|

| Final project due on Angel by 11:59pm

|