|

Date

|

Topic

|

Homework

|

|

1/10

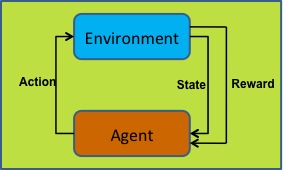

| First day of class: Introduction (with Audio)

|

|

|

1/12

| Second day of class

| Read: Chapter 1

Udacity: Sign up for Machine Learning 3 - Reinforcement Learning

Watch: "Introduction" through "Markov Decision Process Four" (8 videos total)

Sign up for Piazza

|

|

1/17

| Bandits!

| Read: Chapter 2

Udacity: More About Rewards (1 & 2) + Rewards Quiz

Please write a response to both on Piazza by 5pm on Monday

|

|

1/19

| MDPs

|

Read up through section 3.5 in the book

Please write a response on Piazza by 5pm on Wednesday

|

|

1/24

| Value functions

| None

|

|

1/26

| V and Q: What's it to you?

| Watch "Sequences of Rewards" through "Finding Policies 4"

Finish reading chapter 3

(Please write a response on Piazza by 11:59pm on Wednesday.)

|

|

1/31

| Value & Policy Iteration

| Watch up through "What have we learned", finishing Part 1 of the course. Read chapter 4 of the book. Please post to Piazza by 11:59pm on Monday.

|

|

2/2

| MC Hammer? No! MC Methods

| Read up through 5.3. Please write a response on Piazza by 11:59pm on Wednesday.

|

|

2/7

| No Class

| Read up through chapter 5. Watch "2. Reinforcement Learning Basics" (7 videos). Please write a response on Piazza by 5pm on Monday.

|

|

2/9

| No Class

|

|

|

2/14

| Revenge of the Monte Carlo Methods

|

|

|

2/16

| Temporal Difference Methods

| Watch "3. TD and Friends", from "Temporal Difference Learning" through "Quiz: Selecting Learning Rates" (6 videos in total). No response required.

Due by 11:59pm, Saturday 2/18: Homework 1

|

|

2/21

| On vs. Off policy: Q-Learning and Sarsa

| Watch the remainder of "3. TD and Friends".

Read all of chapter 6 in Sutton and Barto.

Please write a response by 11:59pm, Monday 2/20.

|

|

2/23

| TD(λ) and friends

| Read sections 7.1 and 7.2 in book. No response needed.

|

|

2/28

| Eligibility traces

|

Due by 11:59pm, Monday 2/27: Homework 2

|

|

3/2

| Ending Eligibility and Starting State Space Approximation

| Please read chapter 7 in the book.

By midnight Wednesday, please post to Piazza with questions/comments regarding this chapter and Tuesday's recorded class.

|

|

3/7

| Function Approximation

| Please read chapter 8 in the book and post a response on Piazza by midnight on Monday the 6th.

|

|

3/9

| Function Approximation in Practice. We also looked at some code

|

|

|

3/14

| No Class

|

|

|

3/16

| No Class

|

|

|

3/21

| Planning and Final Project Discussion

| Due by 11:59pm, Monday 3/20: Homework 3

|

|

3/23

|

| Read Integrating Reinforcement Learning with Human Demonstrations of Varying Ability

Enter your preference on Doodle for when you'd like to present a paper to the class. Ideally it would be something related to your final project. You can present individually, or with 1 other person.

|

|

3/28

| John Jenkins

| By 11:59pm on 3/27, please submit a proposed final project via Blackboard. One submission per team, at most 1 page. What will you be doing? How will you evaluate it? What will the success conditions be?

Read: Dynamic Algorithm Selection Using Reinforcement Learning

|

|

3/30

| Yunshu Du, Jessie Waite/Lorin Vandegrift

| Read: Policy Gradient Methods for Reinforcement Learning with Function Approximation and A3C. You may also want to look through this: background information on deepRL

|

|

4/4

| No Class: Please work on your final projects

|

|

|

4/6

| Coby Soss, Yang Zhang

| Read: Model-Based Multi-Objective Reinforcement Learning

|

|

4/11

| Alex Joens, Mark Keen

| Please read Human Interaction for Effective Reinforcement Learning and Integrated Modeling and Control Based on Reinforcement Learning and Dynamic Programming

|

|

4/13

| Lei Cai/Hongyang Gao, Zhengyang Wang/Hao Yuan

| Read: Adversarial Learning for Neural Dialogue Generation and SeqGAN

|

|

4/18

| Niloofar Hezarjaribi/Brandon Yang, Tao Zeng/Yongjun Chen

| Please submit your rough draft of the final project to learn.wsu.edu by 11:59pm on 4/18. The more detail you can give me, the better advice I can give. You can find the rubric for the final project here, as discussed in class.

Please read: Reinforcement Learning in Continuous State and Action Spaces (please focus on section 2 and section 3.1)

Please read: Active Object Localization with Deep Reinforcement Learning

|

|

4/20

| Yao Zhang, Yan Zhang

| Please read: Bridging the Gap Between Value and Policy Based Reinforcement Learning and Hierarchical Object Detection with Deep Reinforcement Learning

|

|

4/25

| Kayl/Shivam

Advice for Final Project + Shaping, TAMER

| Please read: Power to the People: The Role of Humans in Interactive Machine Learning

|

|

4/27

| Intrinsic Motivation & Options

|

|

|

5/2

| 8am (Time of Exam): Final Presentations

| Please submit your report for the final project to learn.wsu.edu by 11:59pm on 5/2.

|